Re the article, I don't think it holds water either. (A datetime is made of a 16-bit int for the date, then a 16 bit int for the time.)

But even if the server is 32 bit, meaning two machine instructions per datetime, both variants need to check all 64 bits for every datetime where the date matches the constant, and only 32 bits otherwise. SQLkiwi's point that the comparison is atomic is interesting, I thought perhaps a byte-by-byte ">" could give up before the last byte more often than a ">=" could. Sorry to muddy the picture even more, hope this triggers a thought in someone more knowledgable than me.

(I say exceeding 100 because we never waited until the former query finished as it took over 2 hours on a few million rows.) One of the conditions that improved was (don't ask about the business logic - a pretty unique FY change requirement):ĭbo.Trade.TradeDate > (CASE WHEN MONTH(GETDATE()) =" is optimized, but I have sped up a number of reports by a factor exceeding 100 by wrapping getdate() in a simple UDF. We haven't done rigorous tests but it has made several complex reports feasible that simply wouldn't run otherwise. I'm pretty certain I've seen significant performance improvements by replacing ">= x" with "> x-1ms" when nothing else has been changed, in certain cases but not always.

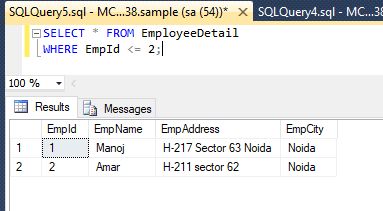

If you choose to run that code yourself, be warned it might take several minutes to create the 10 million row table! INSERT #n (id) VALUES (CONVERT(INTEGER, 4)) ĬREATE INDEX nc1 ON #n (id) INCLUDE (padding) īoth forms of the query complete immeasurably quickly, in 4 logical reads, using an index seek. I doubt this was ever true, frankly, but it certainly isn't today: This optimization is easier with integers, but more difficult with character strings and floating-point data. It is probably much more efficient to write this query like this: If there are many rows where int_col equals 3, the server has to scan many pages to find the first row where int_col is greater than 3. Uses the index to find the first value where int_col equals 3, and then scans forward to find the first value greater than 3. If you are looking for a value greater than or equal to 'x', use >=. I encourage people to write SQL in the most natural form possible, and only tweak it to work around specific (and measurable!) issues. There are also subtle problems around finding the 'next lowest' value for some types like REAL and FLOAT. For example, the code example given would break if we are looking for DATETIME values greater than ''.

There will be no advantage to rewriting GE as GT, and you might even introduce a bug. Where GT represents greater-than and GE represents greater-than-or-equal. For a scan, you will see one of the following XML show plan elements: As far as SQL Server is concerned, the operations are also atomic. That's not the case - even at the microprocessor level, greater than and greater-than-or-equal are implemented as single operations with the same cycle cost. Your colleague is perhaps thinking that >= might require two comparisons (one for equality, and then if that fails, for greater-than). I tested both these queries out and found no difference in performance - however my colleague is still convinced that in certain circumstances the >= operator will perform worse that the > operator.

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed